Apple released macOS Monterey 12.1, iOS 15.2 and iPadOS 15.2 on Monday, introducing a previously announced opt-in communication safety feature for Messages that scans photos in order to warn children, but not their parents, when children receive or send photos containing nudity.

MacDailyNews Note: This iMessage feature is not the untenable (delayed, not canceled) backdoor surveillance system introduced via the trojan horse of “detecting child sexual abuse material (CSAM).”

Mark Gurman for Bloomberg News:

Apple had attempted to launch a trio of new features geared toward protecting children earlier this year: the Messages feature, new options in Siri for learning how to report child abuse, and technology that would detect CSAM (child sexual abuse material) in iCloud photos. But the approach drew outcry from privacy experts, and the rollout was delayed.

Now Apple is delivering the first two features in iOS 15.2, and there’s no word when the CSAM detection function will reappear.

The image detection works like this: Child-owned iPhones, iPads and Macs will analyze incoming and outgoing images received and sent through the Messages app to detect nudity. If the system finds a nude image, the picture will appear blurred, and the child will be warned before viewing it. If children attempt to send a nude image, they will also be warned.

In both instances, the child will have the ability to contact a parent through the Messages app about the situation, but parents won’t automatically receive a notification. That’s a change from the initial approach announced earlier this year.

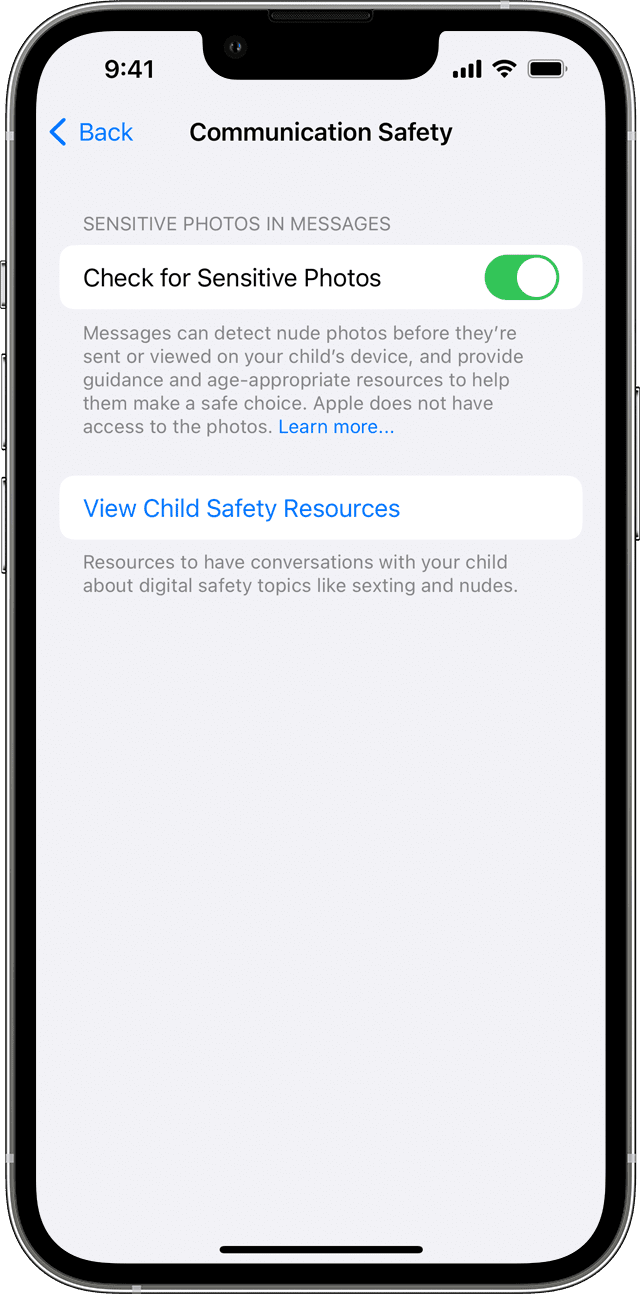

In order for the feature to work, parents need to enable it on a family-sharing account.

Some privacy advocates have panned Apple’s child safety features, saying that the technology could be used by governments to surveil citizens. But the opt-in nature and on-device processing for this feature could quell such concerns—at least for now.

MacDailyNews Take: While the opt-in element for on-device nude image detection is certainly welcome, how much of a leap would it be to enable scanning for any user, not just children, and for content other than nudity?

How much further of a leap would it be for those like Apple who abide by local laws — regardless of the law in question — to be legally forced by governments to send them clandestine notifications whenever “illegal” content is discovered on/by a user’s device?

Imagine China scanning for Winnie-the-Pooh on devices in an effort to weed out critics of Xi Jinping (and/or lovers of anthropomorphic teddy bears with a honey fetish). In 2017, thousands of images, including those depicting Vladimir Putin in full makeup, were outlawed in Russia. Extrapolate.

Further, given Apple’s newfound ability to read text in images and convert that to actual text data, any bastardizations of this innocuous-sounding opt-in on-device Messages photo scanning could quickly become the stuff of dystopian nightmares.


Bless you MDN for keeping on this.

Not sure I fully understand this, but it seems this scanning is opt-in as follows. If you do have family sharing, and some of your family members are kids (not sure how you designate this?), then the feature will turn on and scan. However, if you do not set up family sharing, then this feature will not run.

I guess I’m OK with this if you specifically have to designate your kids as kids. Then it makes sense. However, I am not cool with this if merely turning on family sharing makes all accounts susceptible to this. You should have fine-grained control as a parent to turn this feature on, meaning the parent of the family sharing account has to opt in to enable it on their kid’s device.

If, however, this is just on for anyone who’s a member of a family sharing account, that is total BS scanning.

We need clarification on this.

Yeah, until we get clarification on this, I’m not updating.

The more I think about it, the more I don’t like it. There should be an opt-in checkbox for the parent to clearly turn this feature on or off for their kid: a checkbox on the parent account that says “turn this nudity scan on for kid X, but not for kid Y,” and maybe the parent can only turn it on for kids who are minors.

Some explicit checkbox that must be turned on: something like that is how this should be implemented.

I don’t like this at all. I won’t be updating to this version, at least not without some serious clarification showing that explicit opt-in is required.

Here is the clarification.

https://support.apple.com/en-us/HT212850

So Apple did the right thing here: they gave parents a checkbox to use or not.

You would be ill-advised to trust Apple. Just because they tell you they’re not scanning adults doesn’t mean they’re not.

Use encrypted messaging such as Signal.org to keep corporations out of your business.

Yes. Keep up the good fight, MDN. I’m with you on this and your general dislike and distrust of Cook.

Apple have always been terrible at developing this kind of thing. Family sharing should be baked into all services. I have the Apple TV+ app on my Android TV and no way to restrict content for any users.

MDN’s addition has long been my question…

Why the focus on this crime and not any others? I get it… children are the button that gives permission to move ahead with a program/policy… and they are (mostly) defenseless, but all crime is destructive and costly to someone.

Again, why this crime… why stop here? Maybe Apple needs to start the Division of Observation and Crime Deterrence? After all, they “command” one of the largest networks on the planet. In addition to delivering the greatest number of bits and bytes, please deliver us safety and peace too.

“….and technology that would detect CSAM (child sexual abuse material) in iCloud photos”

This is not a correct depiction of the initiative and how it works!

Many companies are capable of scanning photos on cloud servers! This is not the issue! The whole controversy revolving around Apple’s approach is that surveillance code/mechanisms will be embedded locally on one’s device, and that the surveillance, detection and flagging of the content would take place locally on one’s device!

Not on the iCloud servers!

The only way to disable/circumvent the reporting of on-device flagged images would be to turn off iCloud Photos, the conduit through which locally flagged photos would be reported.

This leaves owners of Apple devices with two choices… both of which break Apple’s longtime marketing and publicity slogans and mantra of ‘privacy and unparalleled ecosystem synergy’ being the paramount distinguishing factors in choosing an Apple device over the competition! To circumvent this surveillance scheme, an individual is left with a choice of either turning off iCloud Photos, hence breaking the famed ecosystem…

or breaking user privacy by allowing this surveillance code to flag and report images through the iCloud Photos conduit.

Make no mistake!!! This is mass surveillance disguised as virtue… it is PRINCIPALLY WRONG!

And it goes against every grain of their mantra and what they have promoted to ‘supposedly’ be their principles! Principles we were all willing to pay a premium for!

And it will be a stab in the back of all those who believed in Apple’s promoted principles… and a massive dent in Apple’s integrity!!

Once the genie is out of the bottle… there’s no saying where it will go!!!

If they can look in photos, they can look into anything, at their own whim, with just a slight modification/tweaking of their embedded surveillance code to allow it to also look for other information on one’s device… and report activity without the device owner’s consent or knowledge!

As Dystopian and Authoritarian as it can get!

Incredibly dangerous… and packed with huge potential to be Abused!

For those interested in more details, read the following:

https://www.theverge.com/2021/8/10/22613225/apple-csam-scanning-messages-child-safety-features-privacy-controversy-explained

Scroll down to:

IS APPLE’S NEW SYSTEM DIFFERENT FROM OTHER COMPANIES’ SCANS?

Yet it seems Apple is ‘creeping’ along, a little step at a time, toward this horrendous initiative, hoping it will go unnoticed!

I hope everyone concerned makes an effort to be heard! Loud and clear!

We die by a thousand paper cuts.

And the real question is: why are Apple/Tim willing to compromise Apple’s integrity at such a fundamental level… for what will be ‘dead on arrival’, at least in the realm Apple so angelically claims it’s intended for?!

Is it “concessions in return for regulatory exemptions” ???

Etc???

If so… too far over the line..

Too ominous!

Most excellent spot on take and enlightening link.

Everyone BEWARE of BIG BROTHER APPLE now invading our privacy in the holy name of protecting the children and as so many have said, where will it end?

Same BASIC Leftist tactic used by Democrat mayors and Democrat governors to lock down our lives and restrict freedom of choice with vaccine and mask mandates, although differences exist between the two. The similarity is acting in the holy name of protecting people, a dishonest tactic, because they know people will ask how you can argue against it. Answer: PLENTY.

Upgraded to iOS 15.1, and one very creepy feature on the page you pull from left to right is a different photo every day, pulled from the Photos album and put at the top of the page. No one from Apple asked for my permission to go through my photo album and post photos AT WILL on my home screen… Shameful.

Apple surveillance needs to be STOPPED COLD…PERIOD!

I won’t be updating. Too many Gonads on every Body can be seen quite clearly in the photos I like to take, and I don’t want Apple spying on them.

If you aren’t a minor on a family plan (that purposely activated the feature) why are you so worried?

That’s an obtuse post not looking for an honest answer. Sorry, you’re kind, but you’ve been duped. I read the exact same post on two other MDN stories by the same poster…

GoeB’sGonads… teabag time!

Updates on all my iOS and macOS devices have been turned off until further notice.

Thank goodness! My photos of Gonads on every Brian will stay private. Thanks, Brian!

Just like, FJB…FTC!

MDN: WHY DON’T YOU OFFER A NO AD SUBSCRIPTION SERVICE?????? Blabbing at us with popups asking us to give support to “reduce the number of ads” is bogus nonsense. You have been proposing this for a long time and the number of ads only INCREASES. Does that mean that you are not being truthful about reducing the number of ads based on user contributions? Or does it prove that nobody is falling for that folly? I will NEVER pay based on the notion that my subscription will “reduce the number of advertisements”. I WILL IMMEDIATELY pay a subscription fee for a completely ad free version of your content. WHAT THE FUCK IS KEEPING YOU FROM OFFERING THIS? So many others have requested the same thing. JUST DO IT! What do you possibly have to lose by offering such an option?

They would lose the outrage of outraged readers. Why don’t you try a badblocker?

This is sure to be a hit with lots of markets. Just think of all the pet clothes sales to avoid taking nude pictures of doggies, pussies and goldfish. Not to mention a whole new variety of salad dressing, don’t want any nude photos of those perverted both male and female tulips turning up. They’ll have to do something about Europe too, no more photos of nude statues carved from bare rock.

This is all a prelude to a new era of technology where children will be born clothed, the way they were intended.

Tongue in cheek can now be removed.

If you think this is about child porn, you are an idiot. This is a step toward spying on people for any purpose. If your device can be scanned for “child porn,” then how long until devices are scanned for anything like guns, political positions, anything of personal choice? Yeah, this is totally about porn… NOT

So the minor gets warned, and then, to view the photo anyway, they get warned again.

No option to notify the parent that Johnny is viewing porn all day?

Useless.

Time to remove Tim “commie” Cook and anyone on the board who’s a leftist commie.